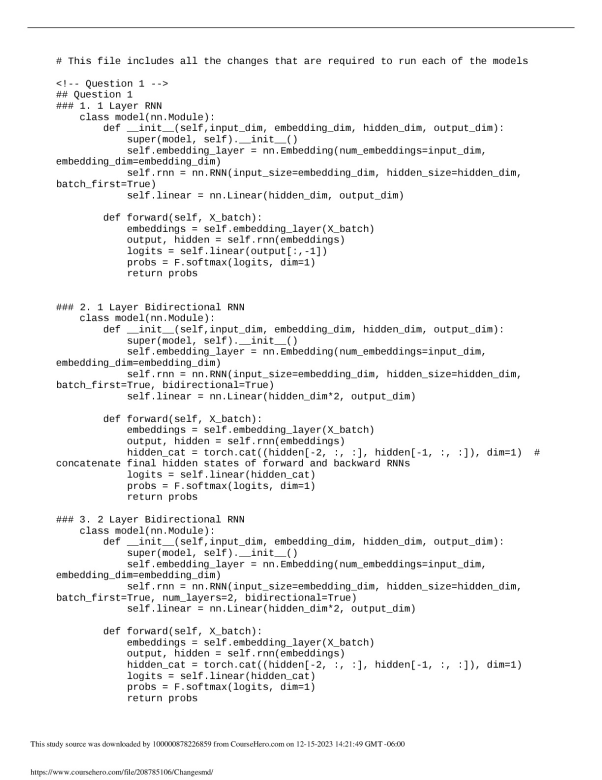

This file includes all the changes required to run each of the models.

## Question 1

### 1. 1-Layer RNN

    import torch.nn as nn
    import torch.nn.functional as F

    class model(nn.Module):
        def __init__(self, input_dim, embedding_dim, hidden_dim, output_dim):
            super(model, self).__init__()
            # Map token indices to dense vectors
            self.embedding_layer = nn.Embedding(num_embeddings=input_dim,
                                                embedding_dim=embedding_dim)
            self.rnn = nn.RNN(input_size=embedding_dim, hidden_size=hidden_dim,
                              batch_first=True)
            self.linear = nn.Linear(hidden_dim, output_dim)

        def forward(self, X_batch):
            # embeddings: (batch, seq_len, embedding_dim)
            embeddings = self.embedding_layer(X_batch)
            # output: (batch, seq_len, hidden_dim)
            output, hidden = self.rnn(embeddings)
            # Classify from the RNN output at the last time step
            logits = self.linear(output[:, -1])
            probs = F.softmax(logits, dim=1)
            return probs
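As a quick sanity check on the `output[:, -1]` indexing, the sketch below runs a standalone unidirectional `nn.RNN` on random embeddings and compares the last time step of `output` with the final hidden state. The tensor sizes are hypothetical values chosen only for illustration; they are not taken from the assignment.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# Hypothetical sizes, chosen only for this check
batch_size, seq_len, embedding_dim, hidden_dim = 4, 7, 8, 16

rnn = nn.RNN(input_size=embedding_dim, hidden_size=hidden_dim, batch_first=True)
embeddings = torch.randn(batch_size, seq_len, embedding_dim)

output, hidden = rnn(embeddings)
# output: (batch, seq_len, hidden_dim); hidden: (num_layers, batch, hidden_dim)
last_step = output[:, -1]

# For a single-direction RNN, the last time step of output is the final hidden state
same = torch.allclose(last_step, hidden[-1])
print(output.shape, hidden.shape, same)
```

For a unidirectional RNN, either `output[:, -1]` or `hidden[-1]` can therefore feed the linear layer.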

### 2. 1-Layer Bidirectional RNN

    import torch
    import torch.nn as nn
    import torch.nn.functional as F

    class model(nn.Module):
        def __init__(self, input_dim, embedding_dim, hidden_dim, output_dim):
            super(model, self).__init__()
            self.embedding_layer = nn.Embedding(num_embeddings=input_dim,
                                                embedding_dim=embedding_dim)
            self.rnn = nn.RNN(input_size=embedding_dim, hidden_size=hidden_dim,
                              batch_first=True, bidirectional=True)
            # The linear layer takes the forward and backward states together
            self.linear = nn.Linear(hidden_dim * 2, output_dim)

        def forward(self, X_batch):
            embeddings = self.embedding_layer(X_batch)
            # hidden: (2, batch, hidden_dim), one entry per direction
            output, hidden = self.rnn(embeddings)
            # Concatenate final hidden states of forward and backward RNNs
            hidden_cat = torch.cat((hidden[-2, :, :], hidden[-1, :, :]), dim=1)
            logits = self.linear(hidden_cat)
            probs = F.softmax(logits, dim=1)
            return probs
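The bidirectional model reads from `hidden` rather than `output[:, -1]`. A minimal sketch of the likely reason, using a standalone `nn.RNN` and hypothetical sizes: the forward direction finishes at the last time step, while the backward direction finishes at the first one, so the last step of `output` does not hold the backward direction's final state.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# Hypothetical sizes, chosen only for this check
batch_size, seq_len, embedding_dim, hidden_dim = 4, 7, 8, 16

birnn = nn.RNN(input_size=embedding_dim, hidden_size=hidden_dim,
               batch_first=True, bidirectional=True)
embeddings = torch.randn(batch_size, seq_len, embedding_dim)

output, hidden = birnn(embeddings)
# output: (batch, seq_len, 2 * hidden_dim); hidden: (2, batch, hidden_dim)

# Forward direction: final state sits in the first half of the last time step
forward_final = output[:, -1, :hidden_dim]
# Backward direction: final state sits in the second half of the first time step
backward_final = output[:, 0, hidden_dim:]

fwd_matches = torch.allclose(hidden[-2], forward_final)
bwd_matches = torch.allclose(hidden[-1], backward_final)

# Same concatenation as in the model's forward pass
hidden_cat = torch.cat((hidden[-2], hidden[-1]), dim=1)
print(fwd_matches, bwd_matches, hidden_cat.shape)
```

Concatenating `hidden[-2]` and `hidden[-1]` gives each direction's summary of the full sequence, which is why the linear layer takes `hidden_dim * 2` inputs.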

### 3. 2-Layer Bidirectional RNN

    import torch
    import torch.nn as nn
    import torch.nn.functional as F

    class model(nn.Module):
        def __init__(self, input_dim, embedding_dim, hidden_dim, output_dim):
            super(model, self).__init__()
            self.embedding_layer = nn.Embedding(num_embeddings=input_dim,
                                                embedding_dim=embedding_dim)
            self.rnn = nn.RNN(input_size=embedding_dim, hidden_size=hidden_dim,
                              batch_first=True, num_layers=2, bidirectional=True)
            self.linear = nn.Linear(hidden_dim * 2, output_dim)

        def forward(self, X_batch):
            embeddings = self.embedding_layer(X_batch)
            # hidden: (num_layers * 2, batch, hidden_dim)
            output, hidden = self.rnn(embeddings)
            # The last two entries are the top layer's forward and backward states
            hidden_cat = torch.cat((hidden[-2, :, :], hidden[-1, :, :]), dim=1)
            logits = self.linear(hidden_cat)
            probs = F.softmax(logits, dim=1)
            return probs
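With two layers, `hidden` stacks one state per layer and direction, so it may help to confirm that `hidden[-2]` and `hidden[-1]` still pick out the top layer. The sketch below assumes hypothetical sizes and a standalone `nn.RNN`, and regroups `hidden` as (layer, direction, batch, hidden_dim) to inspect it.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# Hypothetical sizes, chosen only for this check
batch_size, seq_len, embedding_dim, hidden_dim = 4, 7, 8, 16
num_layers, num_directions = 2, 2

rnn = nn.RNN(input_size=embedding_dim, hidden_size=hidden_dim,
             batch_first=True, num_layers=num_layers, bidirectional=True)
embeddings = torch.randn(batch_size, seq_len, embedding_dim)

output, hidden = rnn(embeddings)
# hidden: (num_layers * num_directions, batch, hidden_dim)

# Regroup so that layers and directions get their own axes
per_layer = hidden.reshape(num_layers, num_directions, batch_size, hidden_dim)
top_forward = per_layer[-1, 0]   # last layer, forward direction
top_backward = per_layer[-1, 1]  # last layer, backward direction

picks_top_layer = (torch.equal(top_forward, hidden[-2])
                   and torch.equal(top_backward, hidden[-1]))

# output only exposes the top layer, so it should agree with those two states
agrees_with_output = (torch.allclose(top_forward, output[:, -1, :hidden_dim])
                      and torch.allclose(top_backward, output[:, 0, hidden_dim:]))
print(hidden.shape, picks_top_layer, agrees_with_output)
```

The first layer's states (`hidden[0]` and `hidden[1]`) are left unused by the classifier, which is why the linear layer's input size stays at `hidden_dim * 2` even with two layers.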
