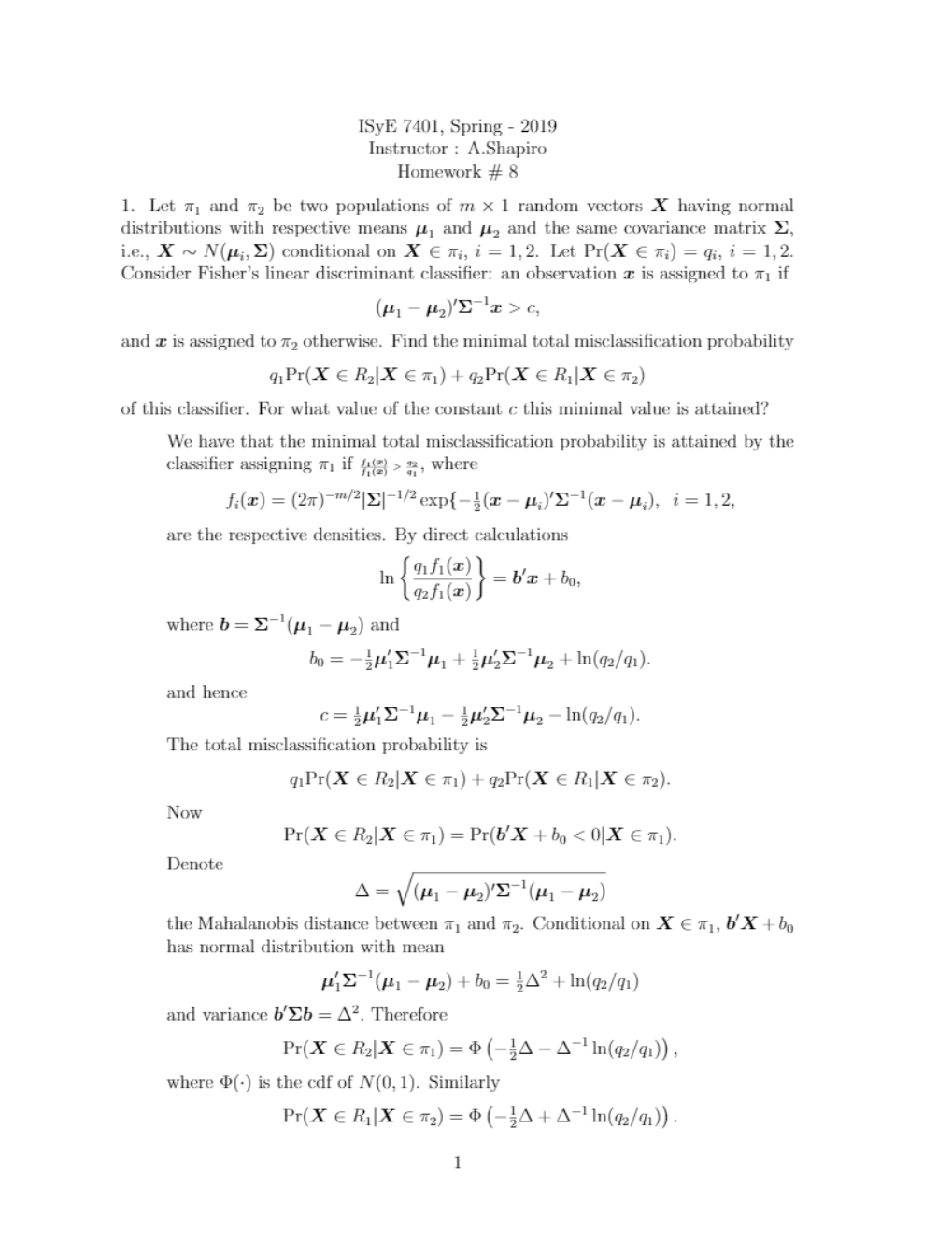

ISyE 7401, Spring 2019

Instructor: A. Shapiro

Homework #8

1. Let $\pi_1$ and $\pi_2$ be two populations of $m \times 1$ random vectors $X$ having normal distributions with respective means $\mu_1$ and $\mu_2$ and the same covariance matrix $\Sigma$, i.e., $X \sim N(\mu_i, \Sigma)$ conditional on $X \in \pi_i$, $i = 1, 2$. Let $\Pr(X \in \pi_i) = q_i$, $i = 1, 2$. Consider Fisher's linear discriminant classifier: an observation $x$ is assigned to $\pi_1$ if

$$(\mu_1 - \mu_2)' \Sigma^{-1} x > c,$$

and $x$ is assigned to $\pi_2$ otherwise. Find the minimal total misclassification probability

$$q_1 \Pr(X \in R_2 \mid X \in \pi_1) + q_2 \Pr(X \in R_1 \mid X \in \pi_2)$$

of this classifier, where $R_i$ denotes the set of observations assigned to $\pi_i$. For what value of the constant $c$ is this minimal value attained?
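For any fixed threshold $c$, the objective above can be estimated by simulation. The following is a minimal sketch and not part of the original assignment: it assumes $m = 2$ so that the matrix algebra can be written out by hand, and the function name `simulate_total_error`, its parameters, and the default sample size are all hypothetical choices made for illustration.

```python
import math
import random


def simulate_total_error(mu1, mu2, sigma, q1, c, n=100_000, seed=0):
    """Monte Carlo estimate of q1*Pr(R2 | pi1) + q2*Pr(R1 | pi2)
    for the rule (mu1 - mu2)' Sigma^{-1} x > c, in the 2-D case."""
    rng = random.Random(seed)
    (s11, s12), (_, s22) = sigma

    # Inverse of the 2x2 covariance matrix.
    det = s11 * s22 - s12 * s12
    inv = [[s22 / det, -s12 / det], [-s12 / det, s11 / det]]

    # Discriminant direction w = Sigma^{-1} (mu1 - mu2).
    d = [mu1[0] - mu2[0], mu1[1] - mu2[1]]
    w = [inv[0][0] * d[0] + inv[0][1] * d[1],
         inv[1][0] * d[0] + inv[1][1] * d[1]]

    # Cholesky factor of Sigma, used to draw correlated normal vectors.
    l11 = math.sqrt(s11)
    l21 = s12 / l11
    l22 = math.sqrt(s22 - l21 * l21)

    errors = 0
    for _ in range(n):
        # Draw the population label with prior q1, then the observation.
        from_pi1 = rng.random() < q1
        mu = mu1 if from_pi1 else mu2
        z1, z2 = rng.gauss(0.0, 1.0), rng.gauss(0.0, 1.0)
        x = (mu[0] + l11 * z1, mu[1] + l21 * z1 + l22 * z2)
        assigned_pi1 = w[0] * x[0] + w[1] * x[1] > c
        errors += assigned_pi1 != from_pi1
    return errors / n
```

Because the population label is drawn with probabilities $q_1$ and $q_2$, the overall error frequency estimates the weighted sum directly. Evaluating the function over several values of `c` gives a rough picture of where the minimum lies.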

We have that the minimal total misclassification probability is attained by the classifier assigning $x$ to $\pi_1$ if $\frac{f_1(x)}{f_2(x)} > \frac{q_2}{q_1}$, where

$$f_i(x) = (2\pi)^{-m/2} |\Sigma|^{-1/2} \exp\left\{-\tfrac{1}{2}(x - \mu_i)' \Sigma^{-1} (x - \mu_i)\right\}, \quad i = 1, 2,$$

are the respective densities. By direct calculations

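$$\ln\left(\frac{q_1 f_1(x)}{q_2 f_2(x)}\right) = b'x + b_0,$$

where $b = \Sigma^{-1}(\mu_1 - \mu_2)$ and

$$b_0 = -\tfrac{1}{2}\mu_1' \Sigma^{-1} \mu_1 + \tfrac{1}{2}\mu_2' \Sigma^{-1} \mu_2 - \ln(q_2/q_1),$$

and hence

$$c = \tfrac{1}{2}\mu_1' \Sigma^{-1} \mu_1 - \tfrac{1}{2}\mu_2' \Sigma^{-1} \mu_2 + \ln(q_2/q_1).$$

The total misclassification probability is

$$q_1 \Pr(X \in R_2 \mid X \in \pi_1) + q_2 \Pr(X \in R_1 \mid X \in \pi_2).$$

Now

$$\Pr(X \in R_2 \mid X \in \pi_1) = \Pr(b'X + b_0 < 0 \mid X \in \pi_1).$$

Denote by

$$\Delta = \sqrt{(\mu_1 - \mu_2)' \Sigma^{-1} (\mu_1 - \mu_2)}$$

the Mahalanobis distance between $\pi_1$ and $\pi_2$. Conditional on $X \in \pi_1$, $b'X + b_0$ has normal distribution with mean

$$\mu_1' \Sigma^{-1} (\mu_1 - \mu_2) + b_0 = \tfrac{1}{2}\Delta^2 - \ln(q_2/q_1)$$

and variance $b' \Sigma b = \Delta^2$. Therefore

$$\Pr(X \in R_2 \mid X \in \pi_1) = \Phi\left(-\tfrac{1}{2}\Delta + \Delta^{-1} \ln(q_2/q_1)\right),$$

where $\Phi(\cdot)$ is the cdf of $N(0, 1)$. Similarly, conditional on $X \in \pi_2$, $b'X + b_0$ has mean $-\tfrac{1}{2}\Delta^2 - \ln(q_2/q_1)$ and variance $\Delta^2$, so

$$\Pr(X \in R_1 \mid X \in \pi_2) = \Phi\left(-\tfrac{1}{2}\Delta - \Delta^{-1} \ln(q_2/q_1)\right).$$

Hence the minimal total misclassification probability is

$$q_1 \Phi\left(-\tfrac{1}{2}\Delta + \Delta^{-1} \ln(q_2/q_1)\right) + q_2 \Phi\left(-\tfrac{1}{2}\Delta - \Delta^{-1} \ln(q_2/q_1)\right).$$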
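The optimality of the likelihood-ratio threshold can be checked numerically. The sketch below is an illustration under stated assumptions, not part of the original solution. It uses the fact that the rule depends on $x$ only through $y = b'x$, which is $N(b'\mu_i, \Delta^2)$ under $\pi_i$, so the check can be done in one dimension. The function names and the inputs `m1` $= b'\mu_1$, `m2` $= b'\mu_2$ (with `m1 - m2` equal to $\Delta^2$), `delta`, and `q1` are hypothetical. The threshold is obtained directly from the log ratio of the weighted densities, which is linear in $y$, and is then compared with a brute-force grid search over $c$.

```python
import math
from statistics import NormalDist


def total_error(c, m1, m2, delta, q1):
    """Total misclassification probability of the rule y > c, where
    y = b'x is N(m1, delta^2) under pi_1 and N(m2, delta^2) under pi_2."""
    phi = NormalDist().cdf  # standard normal cdf
    q2 = 1.0 - q1
    return q1 * phi((c - m1) / delta) + q2 * phi((m2 - c) / delta)


def log_weighted_ratio(y, m1, m2, delta, q1):
    """ln(q1 f1(y) / (q2 f2(y))) for the projected densities; linear in y."""
    q2 = 1.0 - q1
    f1 = NormalDist(m1, delta).pdf(y)
    f2 = NormalDist(m2, delta).pdf(y)
    return math.log(q1 * f1) - math.log(q2 * f2)


def likelihood_ratio_threshold(m1, m2, delta, q1):
    """Root of the linear function y -> ln(q1 f1(y) / (q2 f2(y)))."""
    g0 = log_weighted_ratio(0.0, m1, m2, delta, q1)
    slope = log_weighted_ratio(1.0, m1, m2, delta, q1) - g0
    return -g0 / slope


def grid_minimizer(m1, m2, delta, q1, step=1e-3):
    """Brute-force minimizer of total_error over a grid of thresholds."""
    lo, hi = m2 - 3.0 * delta, m1 + 3.0 * delta
    n = int(round((hi - lo) / step))
    grid = [lo + i * step for i in range(n + 1)]
    return min(grid, key=lambda c: total_error(c, m1, m2, delta, q1))


if __name__ == "__main__":
    # Illustrative values: mu1 = (1, 0)', mu2 = (-1, 0)', Sigma = I,
    # so b = (2, 0)', m1 = 2, m2 = -2, and delta = 2.
    m1, m2, delta, q1 = 2.0, -2.0, 2.0, 0.3
    c_lr = likelihood_ratio_threshold(m1, m2, delta, q1)
    c_grid = grid_minimizer(m1, m2, delta, q1)
    print("likelihood-ratio threshold:", c_lr)
    print("grid-search minimizer:     ", c_grid)
    print("total error at threshold:  ", total_error(c_lr, m1, m2, delta, q1))
```

With equal priors the threshold falls at the midpoint $(m_1 + m_2)/2$ and the total error reduces to $\Phi(-\Delta/2)$, which gives a quick sanity check on any implementation.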
